HODL is bad for Bitcoin

Submitted by brad on Fri, 2018-04-06 12:34

You've probably heard the catchword "HODL!" in the bitcoin/cryptocurrency world. Based on somebody's typo, it is an encouragement to hold on to your bitcoins rather than sell them as the price ramps up to crazy levels. If you're a true believer, you will HODL. Don't cave in to the temptation and pressure to sell (SLEL?) but be sure to HODL. (Previously I wrote about the issues that arise should Bitcoin's price actually stabilize.)

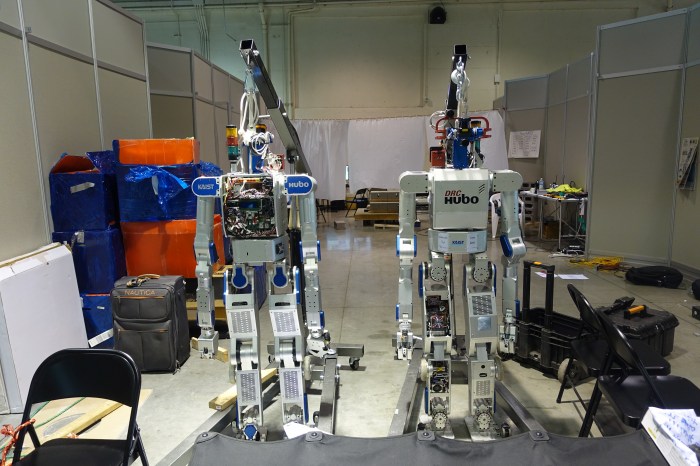

I go to CES first to see the

Of course, for the social site to aggregate and use this data for its own purposes would be a gross violation of many important privacy principles. But social networks don't actually do (too many) things; instead they provide tools for their users to do things. As such, while Facebook should not attempt to detect and use political data about its users, it could give tools to its users that let them select subsets of their friends, based only on information that those friends overtly shared. On Facebook, you can enter the query, "My friends who like Donald Trump" and it will show you that list. They could also let you ask "My friends who match me politically" if they wanted to provide that capability.
