NHTSA/SAE's "levels" of robocars may be contributing to highway deaths

The NHTSA/SAE "levels" of robocars are not just incorrect. I now believe they are contributing to an attitude toward "level 2" autopilots that plays a small but real role in the recent Tesla fatalities.

Readers of this blog will know I have been critical of the NHTSA/SAE "levels" taxonomy for robocars since it was announced. My criticisms have mostly been that the levels are incorrect or misleading, and you may have enjoyed my satire of the levels, which questions the wisdom of defining the robocar based on the role the human being plays in driving it.

Recent events lead me to go further. I believe a case can be made that these levels are holding the industry back and may have played a minor role in the traffic fatalities we have seen with Tesla Autopilot. As such, I urge that the levels be renounced by NHTSA and the SAE and replaced by something better.

Some history

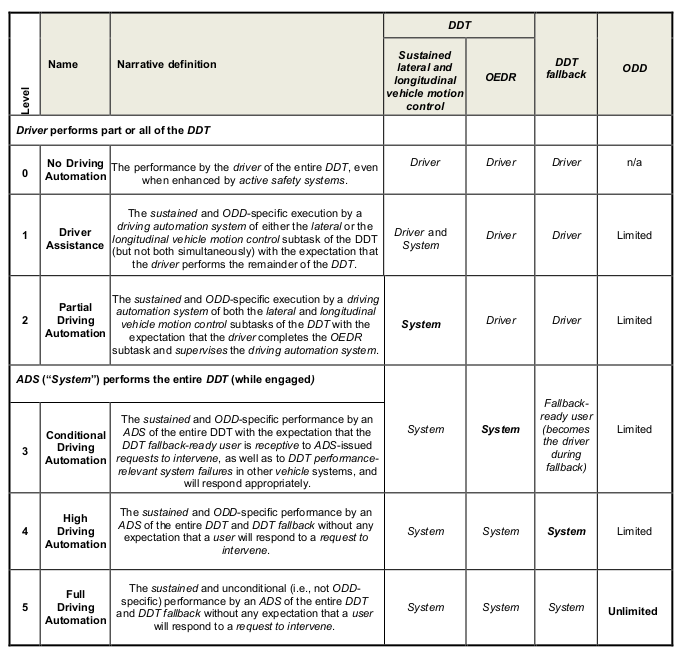

It's true that in the early days, when Google was effectively the only company working on a full self-driving car for the roads, people were looking for some sort of taxonomy to describe the different types of potential cars. NHTSA's first article laid one out as a series of levels numbered 0 to 4, which gave the appearance of an evolutionary progression.

The problem was that none of those stages existed. Even Google didn't know what it wanted to build, and my most important contribution there was probably being one of those who pushed it from the highway car with occasional human driving toward the limited-area urban taxi. Anthony Levandowski first wanted the highway car because it was the easiest thing to build, and he has always been eager to get out into reality as soon as possible.

The models were just ideas, and I don't think the authors at NHTSA knew how firmly they would carve them into public and industry thinking by releasing the idea of levels in an official document. They may not have realized that, once in people's minds, the levels would affect product development and also change ideas about regulation. To regulate something you must define it, and this was the only definition coming from government.

The central error of the levels was threefold. First, it defined vehicles according to the role the human being played in their operation. (In my satire, I compare that to how the "horseless carriage" was first defined by the role the horse played, or didn't play, in it.)

Second, by numbering the levels and charting a path to the future, it advanced the prediction that the levels were a progression: that they would be built in order, each level building on the work of the ones before it.

Third, and worst of all, it set in stone the guess that driver assist (ADAS) technologies were closely related to, and the foundation of, robocar technologies: that they were just different levels of the same core idea.

(The ADAS technologies are things like adaptive cruise control, lanekeeping, forward collision avoidance, blindspot warning, anti-lock brakes and everything else that helps in driving or alerts or corrects driver errors.)

There certainly wasn't consensus on that guess. When Google pushed Nevada to pass the first robocar regulations, the car companies came forward during the comment phase to make one thing very clear: this new law had nothing to do with them. This law was for crazy out-there projects like Google's. The law specifically exempted all of the car companies' ADAS projects, up to and including things like the Tesla Autopilot, from being governed by the law or considered self-driving car technology.

Many in the car companies, whose specialty was ADAS, loved the progression idea. It meant they were already on the right course, and that the huge expertise they had built in ADAS was the right decision and would give them a lead into the future. The levels embodied old-school conventional thinking.

Outside the car companies, the idea was disregarded. Almost all companies went directly to full robocar projects, using existing ADAS tools and hardware barely, if at all. The exceptions were companies in the middle, like Tesla and Mobileye, who had feet in both camps.

In the second version of its standard, SAE, partly at my urging, added language saying that the numbering of the levels was not to be taken as an ordering. Very good, but not enough.

The reality

In spite of the levels, the first vehicle to get commercial deployment was the Navia (now Navya), a low-speed shuttle with no user controls inside: what would be called "level 4." Later, using entirely different technology, Tesla's Autopilot became the first commercial offering of "level 2." Recently, Audi has declared that, given the constraints of operating in a traffic jam, it is selling "level 3." While nobody sold them, car companies demonstrated autopilot technologies going back to 2006, and of course prototype "level 4" cars completed the DARPA Grand Challenge in 2005 and the Urban Challenge in 2007.

In other words, not a progression at all. DARPA's rules were so against human involvement that teams were not allowed to send any signal to their car other than "abort the race."

The confusion between the two broad technological areas has spread to the public. People routinely think of Tesla's Autopilot as actual self-driving car technology, or as primitive self-driving car technology, rather than as extra-smart ADAS.

Tesla's messaging points the public in both directions. On the one hand, Tesla's manuals and startup screen are very clear that the Autopilot is not a self-driving car: it needs to be constantly watched, with the driver ready to take over at any time. In some areas, that's obvious to drivers. The Autopilot does not respond to stop signs or red lights, so anybody driving an urban street without looking would soon get in an accident. On the highway, though, it's better, and some would say too good. It can drive long enough without intervention to lull drivers into a false sense of security.

To prevent that, Tesla takes one basic measure: it requires you to apply a little torque to the steering wheel every so often to indicate your hands are on it. Wait too long and you get a visual alert; wait longer and you get an audible alarm. This is the lowest level of driver attention monitoring out there. Some other systems use a camera to watch the driver's eyes and make sure they are on the road most of the time.
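To make the escalation concrete, here is a minimal Python sketch of a torque-based hands-on-wheel check. It is an illustration under stated assumptions, not Tesla's implementation: the torque threshold and the two timeouts are hypothetical placeholders, and the names `alert_level` and `simulate` are invented for this example.

```python
from enum import Enum


class Alert(Enum):
    NONE = "none"
    VISUAL = "visual"
    AUDIBLE = "audible"


# Hypothetical thresholds for illustration only; NOT Tesla's actual values.
TORQUE_THRESHOLD_NM = 0.3  # steering torque that counts as "hands on"
VISUAL_AFTER_S = 15.0      # seconds without torque before a visual alert
AUDIBLE_AFTER_S = 30.0     # seconds without torque before an audible alarm


def alert_level(seconds_since_torque: float) -> Alert:
    """Map time since the last detected steering torque to an alert level."""
    if seconds_since_torque >= AUDIBLE_AFTER_S:
        return Alert.AUDIBLE
    if seconds_since_torque >= VISUAL_AFTER_S:
        return Alert.VISUAL
    return Alert.NONE


def simulate(torque_samples, dt=1.0):
    """Replay torque readings (Nm) taken every dt seconds and return the
    alert level after each one."""
    since = 0.0
    levels = []
    for torque in torque_samples:
        if abs(torque) >= TORQUE_THRESHOLD_NM:
            since = 0.0  # a nudge on the wheel resets the timer
        else:
            since += dt
        levels.append(alert_level(since))
    return levels
```

Note what such a check measures: only that something applied torque to the wheel recently. It says nothing about where the driver is looking, which is the gap camera-based monitoring tries to close.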

At the same time, Tesla likes to talk up the hope that its Autopilot is a stepping stone. When you order your new Tesla, you can order Autopilot, and you can also pay $5,000 for "full self driving." It's mostly clear that they are two different things. When you order the full self driving package, you don't get it, because it doesn't exist. Rather, you get some extra sensors in the car and Tesla's promise that a future software release will use those extra sensors to give you some form of full self driving. Elon Musk likes to make radically optimistic predictions of when Tesla will produce full robocars that can come to you empty or take you door to door.

Operating domain

NHTSA improved things in its later documents, starting in 2016. In particular, it clarified that it was very important to consider which roads and road conditions a robocar was rated to operate in. It called this the ODD (Operational Design Domain). The SAE had made that clear earlier when it added a "Level 5" to express that its Level 4 did not go everywhere. The Level 5 car that can go literally everywhere remains a science fiction goal for now. Nobody knows how to build it or even truly plans to, because there are diminishing economic returns to handling and certifying safety on absolutely all roads. But it exists to remind people that the only meaningful level (4) does not go everywhere and is not science fiction. Level 3 is effectively a car whose domain has boundaries where a human must take the controls to leave the domain at speed.

NHTSA unified its levels with the SAE's a few years in, but most people still call them the NHTSA levels.

The confusion

The recent fatalities involving Uber and Tesla have shown that the level of confusion among the public is high. There is even confusion among those more familiar with the industry. It has taken press comments from some of the robocar companies to remind people, "Very tragic about that Tesla crash, but realize that was not a self-driving car." And indeed, there are still people in the industry who believe they will turn ADAS into robocars. I am not declaring them to be fools, but rather stating that we need people to be aware that this is very far from a foregone conclusion.

Are the levels solely responsible for the confusion? Surely not. A great deal of the blame can be laid in many places, including on automakers who have been keen to be perceived as in the game even though their primary work is still in ADAS. Automakers were extremely frustrated back in 2010 when the press started writing that the true future of the car was in the hands of Google and other Silicon Valley companies, not theirs. Many of them got to work on real robocar projects as well.

NHTSA and SAE's levels may not be to blame for the confusion, but they are to blame for not doing their best to counter it. They should renounce the levels and, if necessary, create a superior taxonomy which is both based on existing work and flexible enough to handle our lack of understanding of the future.

Robocars and ADAS should be declared two distinct things

The world still hasn't settled on a term, and the government and SAE documents have gone through several themselves (Highly Automated Vehicles, Driving Automation for On-Road Vehicles, Automated Vehicles, Automated Driving Systems, etc.). I will use my preferred term here (robocars), but I understand they will probably come up with something of their own. (As long as it's not "driverless.")

The Driver Assist systems would include traditional ADAS, as well as autopilots. There is no question about the human's role in this technology: the human is always present and alert. These systems have been unregulated and may remain so, though there might be investigation into technologies to ensure drivers remain alert.

The robocar systems might be divided up by their operating domains. While in practice a domain will be a map of specific streets, for the purposes of a taxonomy people will be interested in types of roads and conditions. A rough guess at some categories would be "Highway," "City-Fast" and "City-Slow." Highways would be defined as roads that do not allow pedestrians and/or cyclists. The division between fast and slow will change with time, but today it's probably at about 25 mph. Delivery robots that run on roads will probably stick to the slow category. Subclassifications could cover the presence of snow, rain, crowds, animals and children.
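The rough categories above amount to a simple classification rule. The Python sketch below is only one way to write it down, under assumptions: each road segment is described by a hypothetical `RoadSegment` record with a speed limit and pedestrian/cyclist flags, "pedestrians and/or cyclists" is read as a highway excluding both, and a limit of exactly 25 mph is treated as slow. The cutoff is the guess from the text and is meant to move over time.

```python
from dataclasses import dataclass, field
from enum import Enum


class DomainClass(Enum):
    HIGHWAY = "Highway"
    CITY_FAST = "City-Fast"
    CITY_SLOW = "City-Slow"


# The text's guess for today's fast/slow boundary; expected to change.
FAST_SLOW_CUTOFF_MPH = 25


@dataclass
class RoadSegment:
    # Hypothetical attributes a map might carry for each segment.
    name: str
    speed_limit_mph: int
    allows_pedestrians: bool
    allows_cyclists: bool
    # Optional subclassification tags, e.g. {"snow", "children"}.
    conditions: set[str] = field(default_factory=set)


def classify(seg: RoadSegment) -> DomainClass:
    """Highway = no pedestrians and no cyclists; otherwise split on speed."""
    if not seg.allows_pedestrians and not seg.allows_cyclists:
        return DomainClass.HIGHWAY
    if seg.speed_limit_mph > FAST_SLOW_CUTOFF_MPH:
        return DomainClass.CITY_FAST
    return DomainClass.CITY_SLOW
```

For example, a 65 mph freeway closed to pedestrians and cyclists classifies as `HIGHWAY`, while a 25 mph residential street classifies as `CITY_SLOW`. Keeping the cutoff as a named constant reflects the point that the division will change with time.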

What about old level 3?

What is called level 3, a robocar that needs a human on standby to take over in certain situations, adds some complexity. This is a transitional technology. It will only exist during the earliest phases of robocars, as a "cheat" to get things going in places where the car's domain is so limited that it is forced to transition to human control while moving, on short but not urgent notice.

Many people (including Waymo) think this is a bad idea that should never be built. It certainly should not be declared one of the levels of a numbered progression. The concern is that a transition to human driving while moving at speed is risky, exactly the sort of handoff where failure is most common in other forms of automation.

Even so, car companies are building this, particularly for the traffic jam. While the first visions of a car with a human on standby mostly described a highway car with the human handling exit ramps and construction zones, an easier and still useful product is the traffic jam autopilot. It can drive safely with no human supervision in traffic jams. When the jam clears, the human needs to do the driving. Unlike the highway version, however, it can be built without any need for takeover at speed. The takeover can happen when stopped or at low speed, and if the human can't take over, stopping is a reasonable option because the traffic was very slow only moments before.

Generally, however, these standby driver cars will be a footnote of history and don't really deserve a place in the taxonomy. While all cars will have regions they don't drive in, they will also all be capable of coming to a stop near the border of such regions, allowing the human to take control while slow or stopped, which is safe.

The public confusion slows things down

Tesla knows it does not have a robocar and warns its drivers about this regularly, though they ignore it. Some of that inattention may come from drivers imagining they have "almost a robocar." But even without that factor, the taxonomies create another problem. The public, told that the Tesla is just a lower level of robocar, sees the deaths of Tesla drivers as a sign that real robocar projects are more dangerous. The real projects do have dangers, but not the same dangers as the autopilots. (Though clearly lack of driver attention is an issue both have on their plates.) A real robocar is not going to follow badly painted highway lines right into a crash barrier. Robocars follow their maps, and the lane markers are just part of how they decide where they are and where to go.

But if the public says, "We need the government to slow down the robocar teams because of those Tesla crashes" or "I don't trust getting in the Waymo car because of the Tesla crashes" then we've done something very wrong.

(If the public says they are worried about the Waymo car because of the Uber crash, they have a more valid point, though those teams are also very different from one another.)

The Automated/Autonomous confusion

For decades, roboticists used the word "autonomous" to refer to robots that took actions and made decisions without relying on an outside source (such as human guidance). They never used it in the political "self-ruling" sense, though that is the more common (but not only) meaning in everyday speech.

Unfortunately, one early figure in car experiments disliked that the roboticists' vocabulary didn't match his narrow view of the word's meaning, and he pushed, with moderate success, for the academic and governmental communities to use the word "automated." To many people, however, "automated" implies simpler levels of automation. Your dishwasher is automated. Your teller machine is automated. To the roboticist, the robocar is "autonomous": it can drive entirely without you. The autopilot is automated: it needs human guidance while computers do some of the tasks.

I suspect the public might better understand the difference if the words were split this way: the Waymo car is autonomous, the Tesla automated. Everybody in robotics knows they don't use the word autonomous in the political sense. I expressed this in a joke many years ago: "A truly autonomous car is one that, if you tell it to take you to the office, says it would rather go to the beach instead." Nobody is building that car. Yet.

Are they completely disjoint?

I am not attempting to say that there are no commonalities between ADAS and robocars. In fact, as development of both technologies progresses, elements of each have slipped into the other, and will continue to do so. Robocars have always used radars created for ADAS work. Computer vision tools are being used in both systems. The small ultrasonic sensors built for ADAS are used by some robocars for close-in detection where their LIDARs don't see.

Even so, the difference is big enough to be qualitative and not, as numbered levels imply, quantitative. A robocar is not just an ADAS autopilot that is 10 times or 100 times or even 1,000 times better. It's such a large difference that it doesn't happen by evolutionary improvement but through different ways of thinking.

There are people who don't believe this, and Tesla is the most prominent of them.

As such, I am not declaring that the two goals are entirely disjoint, but rather that official taxonomies should declare them to be disjoint, so that developers and the public know the difference and adjust their expectations accordingly. This won't forbid cross-pollination between the efforts. It won't even stop those who disagree with all I have said from trying the evolutionary approach on their ADAS systems to create robocars.

Proposed revision

SAE should release a replacement for its levels, and NHTSA should endorse this or do the same.

In particular:

- Levels 0, 1 and 2 should be moved to another document, and level 2 should be renamed "Full Driver Assist" or "Full ADAS."

- Levels 3, 4 and 5 should be replaced with a modest taxonomy of operating domains. What was level 3 would become an operating domain which must be exited while moving, i.e., a human operator must be ready to take over at the boundary of the domain. Level 5 becomes an operating domain of "all paved roads" or similar.

- The removal of the ADAS levels should come with a pointer to the alternate document and an explicit retraction of the numbered levels, cautioning engineers and vendors to work to avoid confusion between driver assist technologies and autonomous driving technologies.

I say a modest taxonomy because in 2018 we still don't truly know what domains will make sense. We can make our guesses, but we should be clear about what's a guess and what isn't.

Likely factors defining an operational domain could include speed, isolation of the road from traffic (i.e., limited-access highways), weather conditions, school zones, etc.
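As a rough illustration of how an operating-domain description might stand in for levels 3 to 5, here is a Python sketch. The `OperatingDomain` record, its field names, and the three example domains with their speeds and weather sets are all hypothetical and chosen only for illustration. The two ideas taken from the proposal are that old level 3 becomes a domain that must be exited while moving, and old level 5 becomes an "all paved roads" domain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingDomain:
    # Hypothetical fields based on the factors listed above.
    name: str
    max_speed_mph: int
    limited_access_only: bool   # road isolated from other traffic
    weather: frozenset          # conditions the system is rated for
    excludes_school_zones: bool
    exit_while_moving: bool     # True = what was called "level 3"

    def needs_standby_human(self) -> bool:
        """A human must be ready only if the boundary is crossed at speed."""
        return self.exit_while_moving

    def covers(self, speed_mph, weather, on_limited_access, in_school_zone) -> bool:
        """Check whether the current situation lies inside this domain."""
        if speed_mph > self.max_speed_mph:
            return False
        if weather not in self.weather:
            return False
        if self.limited_access_only and not on_limited_access:
            return False
        if self.excludes_school_zones and in_school_zone:
            return False
        return True


# Illustrative example domains; the numbers are placeholders.
TRAFFIC_JAM = OperatingDomain("traffic jam", 37, True, frozenset({"dry"}), True, False)
HIGHWAY_PILOT = OperatingDomain("highway, human exits", 70, True,
                                frozenset({"dry", "rain"}), True, True)
ALL_PAVED = OperatingDomain("all paved roads", 85, False,
                            frozenset({"dry", "rain", "snow"}), False, False)
```

Here the traffic jam domain does not need a standby human, because leaving it means slowing or stopping, while the highway domain with human-handled exits does. That is the distinction the proposal draws, expressed as a property of the domain rather than as a numbered level.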

Comments

Russell de Silva

Tue, 2018-04-17 19:13


Article on Ars Technica echoes your thoughts on levels

https://arstechnica.com/cars/2018/04/why-selling-full-self-driving-before-its-ready-could-backfire-for-tesla/

Essentially what you are saying: that it is not obvious there is a progression from one stage to the next, and that ADAS should be treated as completely different in kind from self-driving vehicles.

Comments seem to indicate agreement both with that and with progression via a geo-locked rollout using a taxi service.

David Chase

Fri, 2018-04-20 21:32


Humans should be out of the loop

My main gripe with the assumption of human monitoring or assist is that it will tend to compromise some of the safety choices that we'd expect in a fully autonomous car. A full robocar will be safer because it will drive differently from a human, and not do unsafe things that humans actually like to do (principally driving too fast, but also tailgating and pretending not to see pedestrians just entering crosswalks). Because the car handles 100% of the driving, the human is free to do something else and not pay attention to the driving.

If a human must be sitting at the ready as a mostly backseat driver, however, then they must pay attention and cannot spend their time in other productive or amusing activities. They'll be impatient, second guess the robot-car, and be unhappy.

Underlying this is the assumption (backed up by years of experience driving and biking in traffic) that humans are pretty bad at safety when they're driving, yet don't realize it, so the usual model for how a robot car would drive is "like a human, only better at paying attention" as opposed to "like a human, but with all the stupid antisafety habits removed".

David Gelperin

Mon, 2018-04-30 15:38


SAE Autonomous Control Levels Considered Harmful

In The Inmates Are Running the Asylum, Alan Cooper describes programmers (engineers) as a different species (Homo logicus) that should never be allowed to design an interface.

The Society of Automotive Engineers (SAE) defines a five-level framework for autonomous vehicle control as:

1. Cruise control.

2. Traffic-sensitive cruise control for both steering and speed.

3. Self-driving, but with a human available to take over if needed.

4. No driver needed, under the right conditions.

5. No driver needed ever.

This is a reasonable framework, as long as you ignore human psychology. We have already witnessed the dangers of this framework at level 2. The better the autonomous performance, the worse a driver’s readiness to take over.

Consider a two-level framework for autonomous control:

1. Manual control with a cruise control option

2. Autonomous control in an approved context

In a recent blog post for Scientific American, "To Make Autonomous Vehicles Safe, We Have to Rethink 'Autonomous' and 'Safe'," Jesse Dunietz wrote:

“Situations where fully autonomous vehicles are poised to take over are much narrower than the hype might suggest. The pilot deployments currently being tested, … , are commercially managed fleets constrained to well-mapped areas with clear weather, wide streets and moderate traffic.”

Autonomous driving should only be approved for restricted environments and restricted environmental conditions – such as those described above. When an autonomous vehicle leaves its restricted environment or encounters conditions for which it is not approved, it should park and switch to manual control.

Jesse also wrote:

“Such applications have lower safety requirements than unrestricted driving. Unrealistically high standards would jeopardize AVs’ substantial benefits in the domains where they’re just becoming viable.”

I think Jesse meant “Such applications have fewer safety hazards to mitigate than unrestricted driving”. Safety requirements should be uniformly strong across all approved contexts.

With a detailed description of safe contexts, a consumer can decide which AVs, if any, suit their driving needs. Contrast this with SAE human involvement levels 2 thru 4, which say “ALWAYS be ready to deal with unpredictable failures”.

brad

Mon, 2018-04-30 16:22


Who designs

It is of course silly to say that programmers can't design interfaces. Rather, what you want to say is that additional skills are required: the designer needs to know how it works, but needs other skills too.

I do not approve of a "two level" framework because they are not levels. Driver assist and self-drive are, in my view, two different technologies that do some similar things. An escalator is not a different level of people mover from an elevator. A calculator is not a different level of calculating machine from an abacus or slide rule.

There is hype, but the people building the tech know what they are building. At least on the serious teams.
