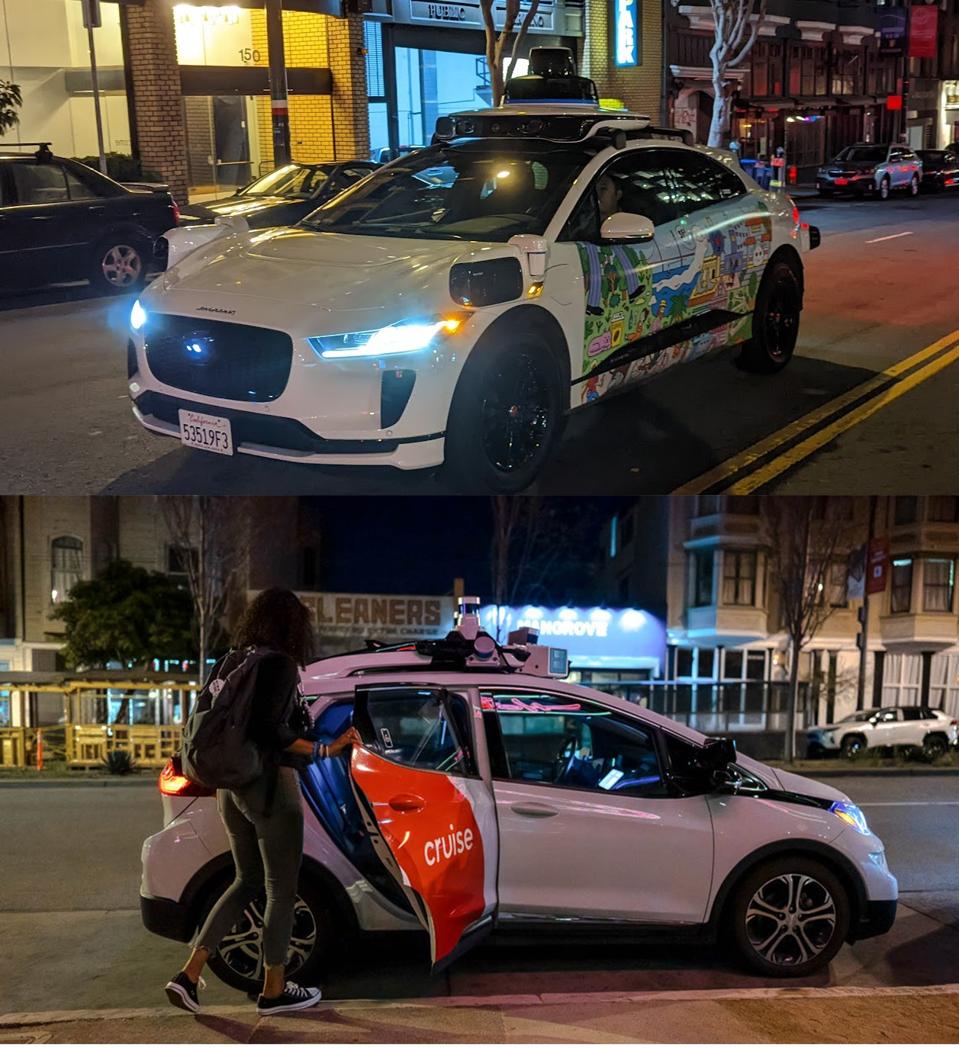

Transit Study Reveals Robotaxis Causing Surprisingly Little Disruption On Streets

Submitted by brad on Mon, 2023-04-10 16:22

San Francisco Muni decided to study problems caused by robotaxis, possibly hoping to gather data to oppose them. Instead, the data shows the robotaxis are doing amazingly well, which should end the debate.

Read more at Forbes.com in Transit Study Reveals Robotaxis Causing Surprisingly Little Disruption On Streets